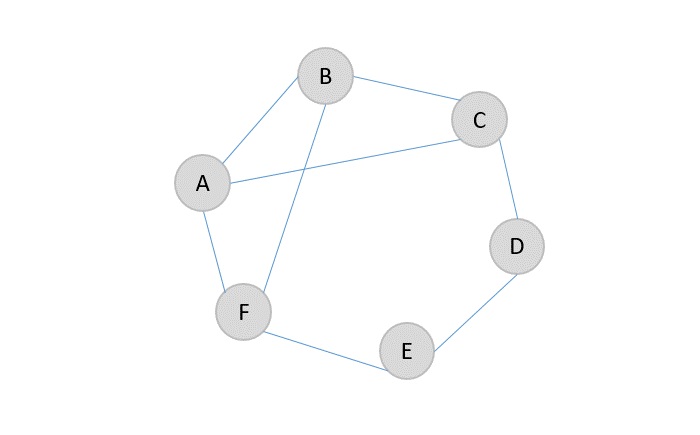

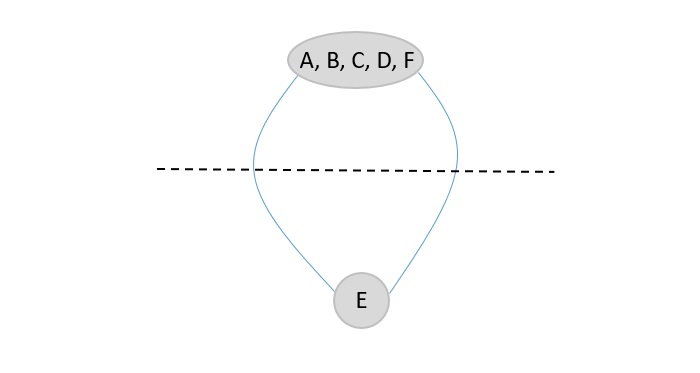

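A maximum bipartite graph is a bipartite graph that has the highest possible number of edges between its two sets of vertices. It is more commonly called a complete bipartite graph and is written K_{m,n}, where m and n are the sizes of the two sets.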

A bipartite graph is a graph where the set of vertices can be divided into two groups, such that no two vertices within the same group are directly connected. The maximum bipartite graph is one where every vertex in the first group is connected to every vertex in the second group.

If one group has m vertices and the other has n vertices, the total number of edges in the maximum bipartite graph is:

$$E_{\max} = m \cdot n$$
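As a quick check of the formula, here is a worked example. The sizes m = 3 and n = 4 are chosen purely for illustration and do not come from the text above. A simple graph on 7 vertices can have at most 21 edges, and the 9 edges missing from the maximum bipartite graph are exactly the ones that would join two vertices of the same group:

$$
\begin{aligned}
E_{\max} &= m \cdot n = 3 \cdot 4 = 12 \\
\binom{m+n}{2} &= \binom{7}{2} = 21 \\
\binom{m}{2} + \binom{n}{2} &= 3 + 6 = 9 = 21 - 12
\end{aligned}
$$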

Properties of Maximum Bipartite Graphs

A maximum bipartite graph has the following properties:

- It has two separate groups of vertices, and edges only connect vertices from different groups.

- The total number of edges is the maximum possible, which is the product of the number of vertices in each group.

- Every cycle must alternate between the two groups, so every cycle has even length and the graph never contains an odd-length cycle.

- The graph can always be colored using just two colors, one for each group of vertices.

- Maximum bipartite graphs are useful in real-world applications like job assignments, networking, and resource allocation.
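The two-coloring property can be checked mechanically. The following is a minimal sketch in C, assuming the graph is stored as a symmetric adjacency matrix. The function name `two_color` and the bound `MAX_V` are illustrative choices, not part of the tutorial's own code.

```c
#include <stdbool.h>

#define MAX_V 16 /* illustrative upper bound on the number of vertices */

/* Tries to 2-color an undirected graph given as an adjacency matrix.
 * Returns true and fills color[] with 0 or 1 if the graph is bipartite.
 * Returns false as soon as two adjacent vertices would share a color,
 * which happens exactly when the graph contains an odd-length cycle. */
bool two_color(int n, int adj[MAX_V][MAX_V], int color[MAX_V]) {
    int queue[MAX_V]; /* each vertex is enqueued at most once */

    for (int i = 0; i < n; i++)
        color[i] = -1; /* -1 means "not colored yet" */

    for (int start = 0; start < n; start++) { /* cover every component */
        if (color[start] != -1)
            continue;
        int head = 0, tail = 0;
        color[start] = 0;
        queue[tail++] = start;

        while (head < tail) { /* breadth-first search */
            int u = queue[head++];
            for (int v = 0; v < n; v++) {
                if (!adj[u][v])
                    continue;
                if (color[v] == -1) {
                    color[v] = 1 - color[u]; /* neighbor gets the other color */
                    queue[tail++] = v;
                } else if (color[v] == color[u]) {
                    return false; /* same color on both ends of an edge */
                }
            }
        }
    }
    return true;
}
```

Calling `two_color` on a maximum bipartite graph should give every vertex of one group color 0 and every vertex of the other group color 1, while a triangle is rejected.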

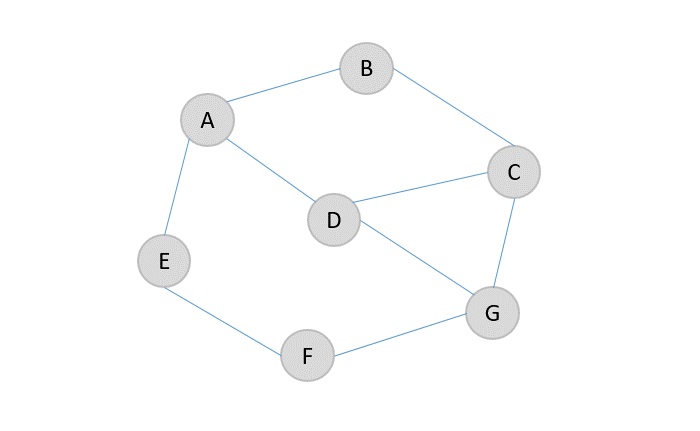

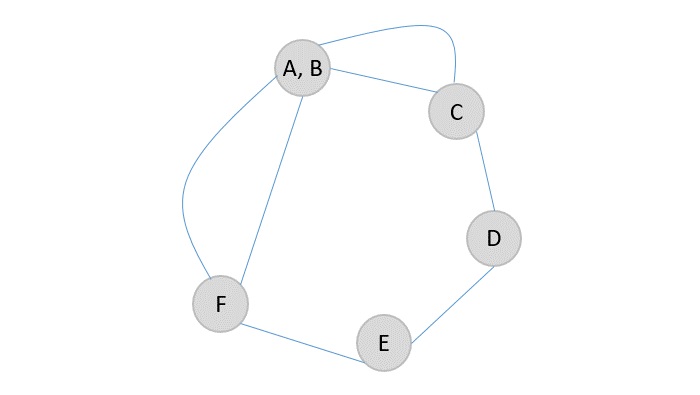

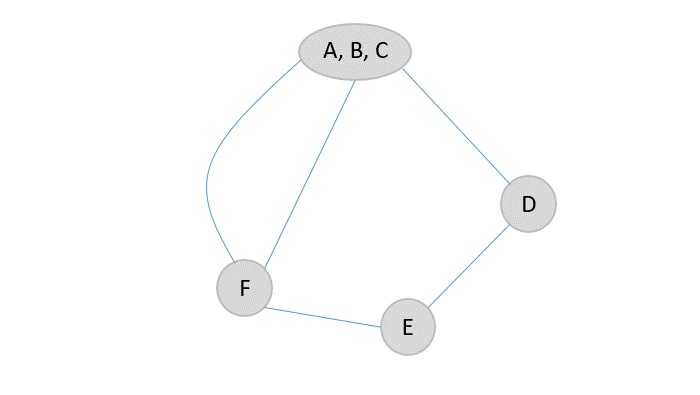

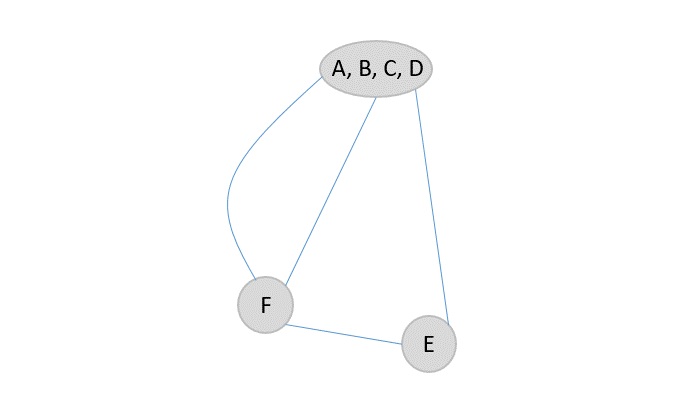

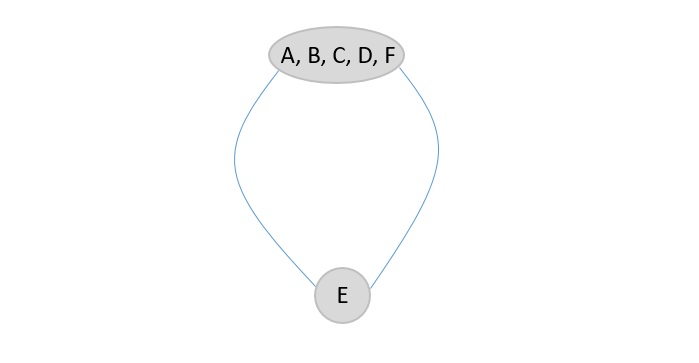

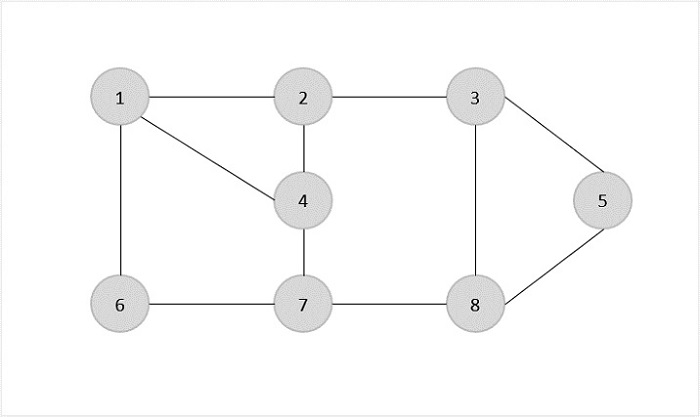

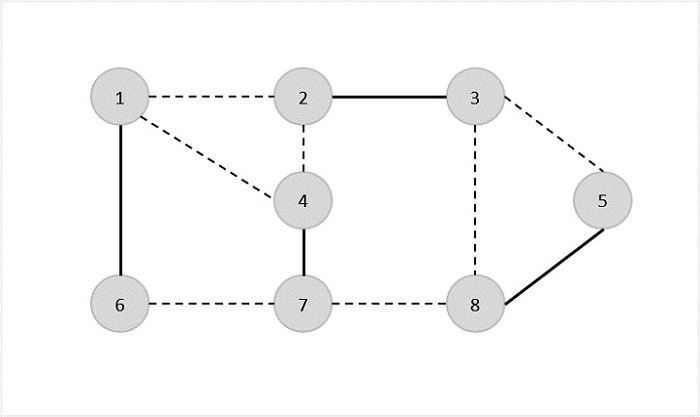

Example of Maximum Bipartite Graph

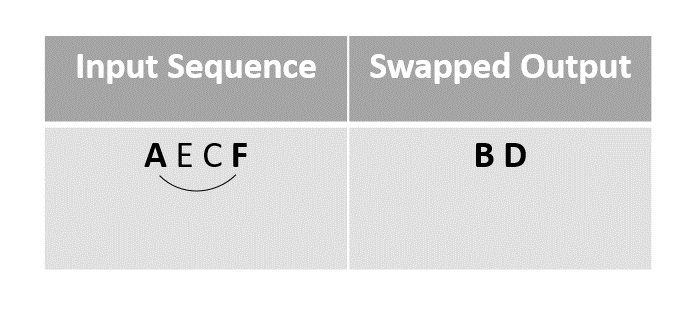

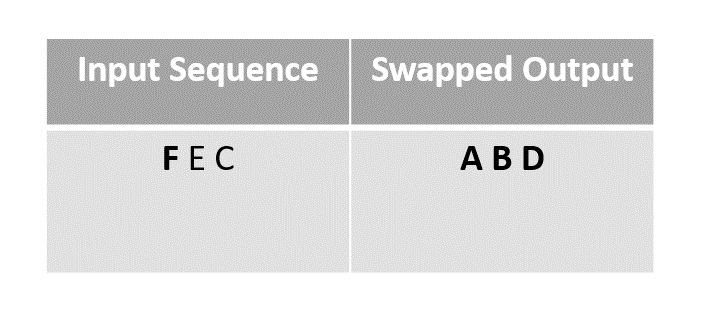

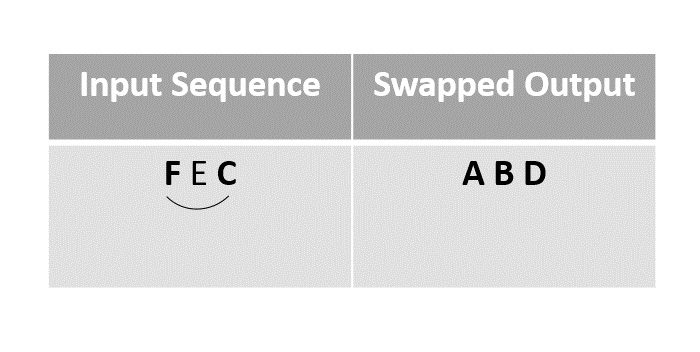

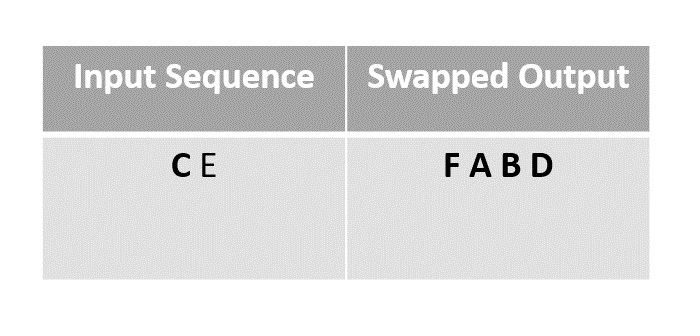

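Consider a bipartite graph with four vertices, A, B, C, and D, in which A and C form one group and B and D form the other. Edges may only join a vertex in {A, C} to a vertex in {B, D}, so A and C are never adjacent, and neither are B and D.

With m = 2 and n = 2, the maximum bipartite graph on these groups has 2 × 2 = 4 edges: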

- A — B

- A — D

- C — B

- C — D

There are four edges in total, which is the maximum possible for this graph.
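The same example can be built in code. This is a small sketch, assuming the graph is stored as an M × N matrix whose rows are the first group and whose columns are the second group. The function names `build_complete_bipartite` and `print_edges` are hypothetical helpers introduced here for illustration.

```c
#include <stdio.h>

#define M 2 /* size of the first group, {A, C} */
#define N 2 /* size of the second group, {B, D} */

/* Sets bp[i][j] = 1 for every pair (i, j), connecting each vertex of the
 * first group to each vertex of the second, and returns the edge count. */
int build_complete_bipartite(int bp[M][N]) {
    int edges = 0;
    for (int i = 0; i < M; i++) {
        for (int j = 0; j < N; j++) {
            bp[i][j] = 1;
            edges++;
        }
    }
    return edges; /* always M * N */
}

/* Prints every edge using the vertex names from the example. */
void print_edges(int bp[M][N]) {
    const char left[M] = {'A', 'C'};
    const char right[N] = {'B', 'D'};
    for (int i = 0; i < M; i++)
        for (int j = 0; j < N; j++)
            if (bp[i][j])
                printf("%c - %c\n", left[i], right[j]);
}
```

Calling `build_complete_bipartite` on a 2 × 2 matrix returns 4, and `print_edges` then lists the same four edges as the example: A - B, A - D, C - B, and C - D.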

Algorithms for Maximum Bipartite Matching

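A matching is a set of edges in which no two edges share a vertex, and a maximum matching is a matching with the largest possible number of edges. Several algorithms find a maximum matching in a bipartite graph:

- Hopcroft-Karp Algorithm: finds a batch of shortest augmenting paths in each phase.

- Ford-Fulkerson Algorithm: treats matching as a maximum-flow problem with unit capacities.

- Hungarian Algorithm: solves the weighted version, known as the assignment problem.

- Edmonds-Karp Algorithm: Ford-Fulkerson with augmenting paths chosen by BFS.

- Augmenting Path Algorithm: repeatedly grows the matching by one edge using a DFS or BFS search.

- Network Flow Algorithm: any maximum-flow method applied to the same unit-capacity network.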

Code to Find Maximum Bipartite Matching using Hopcroft-Karp Algorithm

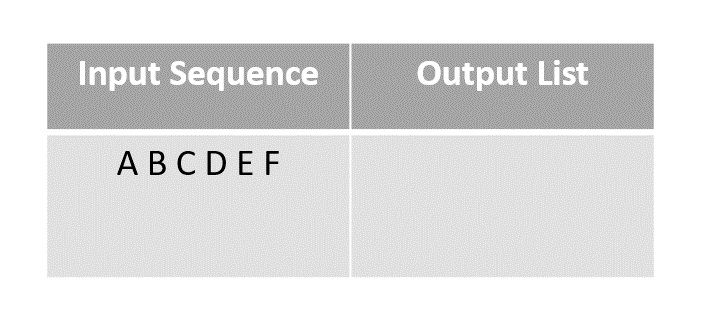

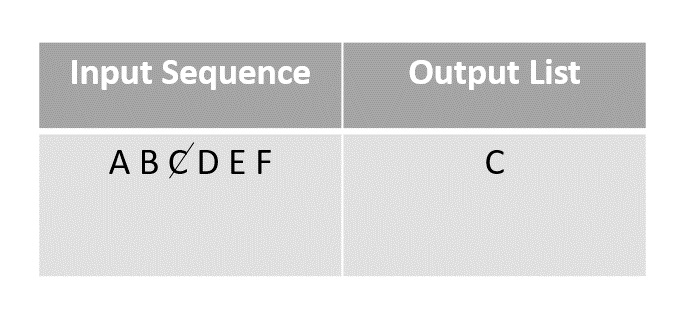

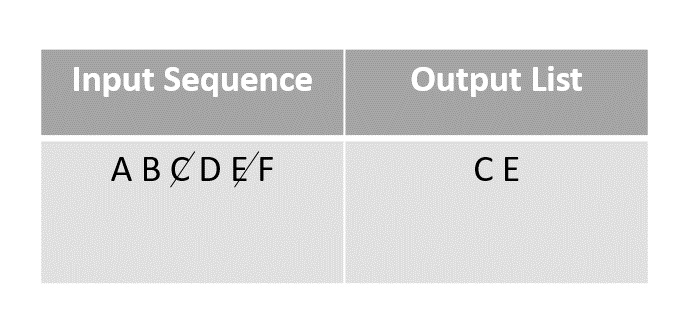

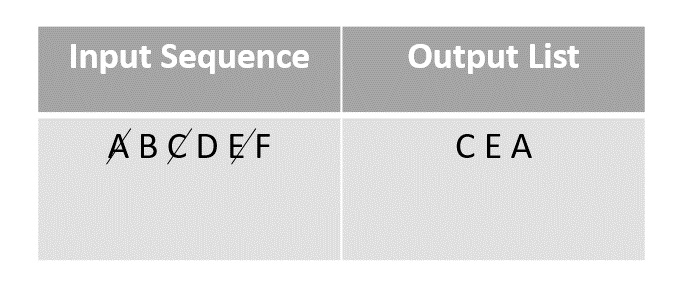

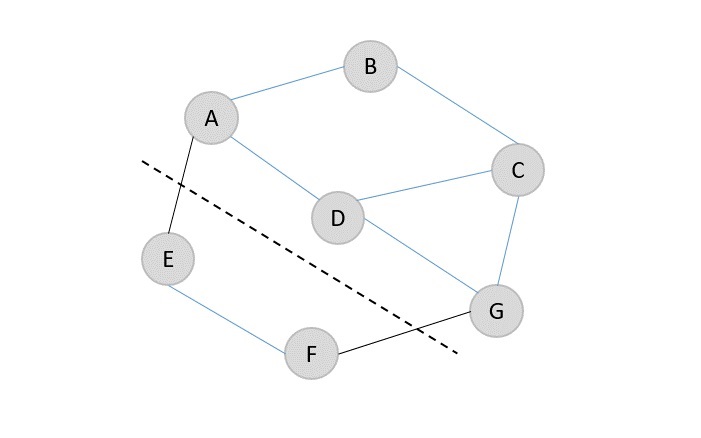

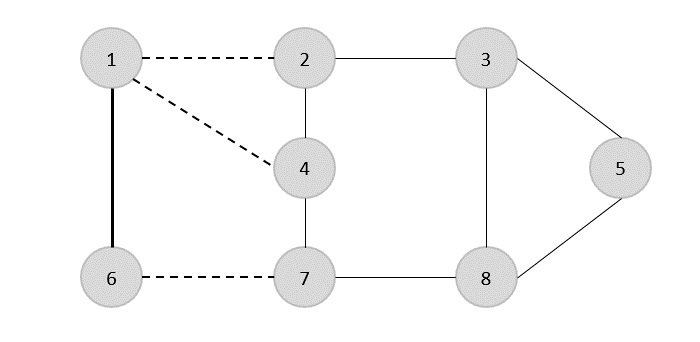

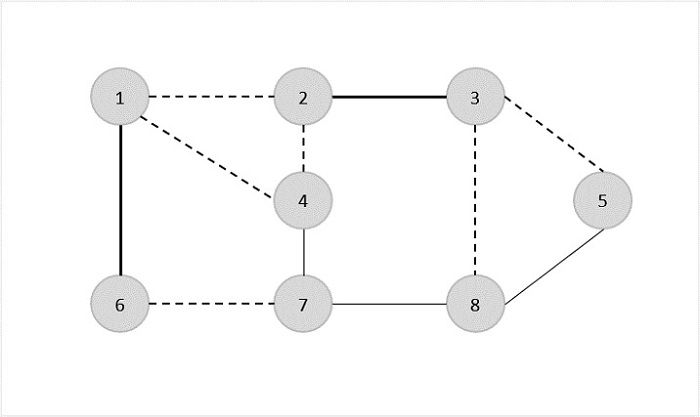

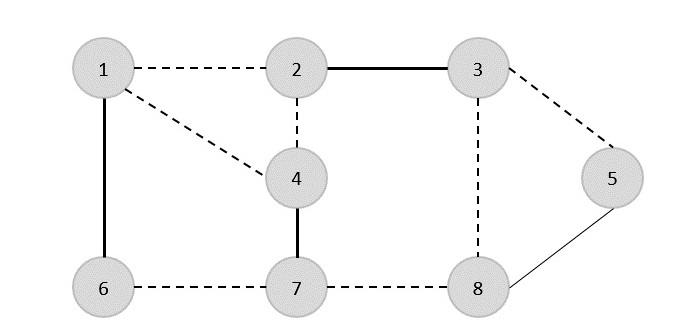

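Hopcroft-Karp, like other augmenting-path methods, finds the maximum bipartite matching in a graph with the following steps:

1. Create a bipartite graph with two sets of vertices.

2. Initialize an empty matching between the two sets.

3. While there is an augmenting path in the graph:

a. Find an augmenting path, that is, a path that starts and ends at unmatched vertices and alternates between edges outside and inside the current matching. BFS or DFS can be used for the search.

b. Update the matching by flipping the edges along the path: edges that were outside the matching are added and edges that were inside it are removed. This increases the size of the matching by one.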

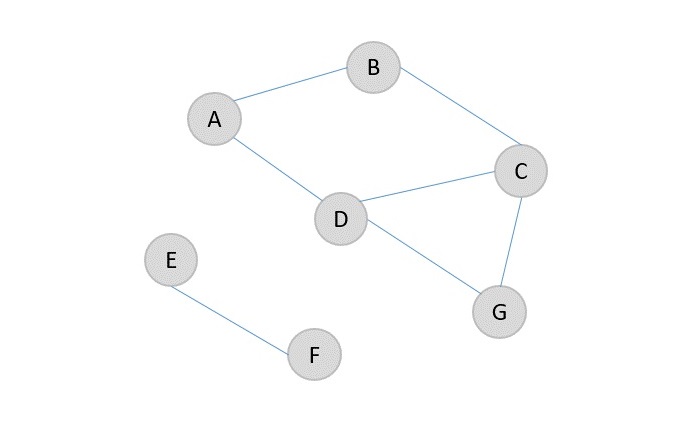

4. Return the maximum matching found.

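The C program below finds the maximum bipartite matching using the simple DFS-based augmenting-path method (sometimes called Kuhn's algorithm), which augments along one path at a time. Hopcroft-Karp follows the same loop but uses a BFS to find a batch of shortest augmenting paths in each round: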

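```c
// C program to find maximum matching in a bipartite graph
#include <stdio.h>
#include <stdbool.h>

#define M 6 // number of vertices in the first group (rows)
#define N 6 // number of vertices in the second group (columns)

// Searches by DFS for an augmenting path that starts at left vertex u.
// Returns true if u can be matched, possibly by re-matching other vertices.
bool bpm(int bpGraph[M][N], int u, bool seen[], int matchR[]) {
    for (int v = 0; v < N; v++) {
        // Consider right vertex v only if u-v is an edge and v is unvisited
        if (bpGraph[u][v] && !seen[v]) {
            seen[v] = true;
            // v is free, or the vertex currently matched to v can move elsewhere
            if (matchR[v] < 0 || bpm(bpGraph, matchR[v], seen, matchR)) {
                matchR[v] = u;
                return true;
            }
        }
    }
    return false;
}

// Returns the size of a maximum matching in bpGraph
int maxBPM(int bpGraph[M][N]) {
    int matchR[N]; // matchR[v] is the left vertex matched to v, or -1
    for (int i = 0; i < N; i++)
        matchR[i] = -1;

    int result = 0;
    for (int u = 0; u < M; u++) {
        bool seen[N]; // reset the visited marks for each new search
        for (int i = 0; i < N; i++)
            seen[i] = false;
        if (bpm(bpGraph, u, seen, matchR))
            result++;
    }
    return result;
}

int main() {
    int bpGraph[M][N] = {{0, 1, 1, 0, 0, 0},
                         {1, 0, 0, 1, 0, 0},
                         {0, 0, 1, 0, 0, 0},
                         {0, 0, 1, 1, 0, 0},
                         {0, 0, 0, 0, 0, 0},
                         {0, 0, 0, 0, 0, 1}};
    printf("Maximum matching is %d\n", maxBPM(bpGraph));
    return 0;
}
```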

Output

Maximum matching is 5

Applications of Maximum Bipartite Matching

Maximum bipartite matching algorithms have several real-world applications:

- Job Assignments: Assigning tasks to workers based on their skills and availability.

- Networking: Routing data packets through a network to optimize traffic flow.

- Resource Allocation: Allocating resources like time, money, or equipment to maximize efficiency.

- Marriage Matching: Pairing individuals based on preferences and compatibility.

- Project Planning: Scheduling tasks and dependencies to complete projects on time.

These applications demonstrate the importance of maximum bipartite matching algorithms in solving complex optimization problems.

Time Complexity of Maximum Bipartite Matching

The time complexity of finding the maximum bipartite matching in a graph using the Hopcroft-Karp algorithm is O(sqrt(V) * E), where V is the number of vertices and E is the number of edges in the graph. This makes it efficient for sparse graphs with a large number of vertices. By comparison, the simple DFS-based method that augments along one path at a time takes O(V * E) when the graph is stored as adjacency lists.
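To show where the square-root factor comes from, here is a minimal sketch of Hopcroft-Karp in C. It assumes the same M × N adjacency-matrix layout as the tutorial's program; the names `hopcroft_karp`, `bfs`, `dfs`, `pairU`, `pairV`, and `dist` are illustrative. Because it scans a matrix rather than adjacency lists, this version does not reach the O(sqrt(V) * E) bound itself, but the phase structure is the same: each BFS layers the graph, and the DFS calls then augment along several paths before the next BFS.

```c
#include <stdbool.h>
#include <limits.h>

#define M 6 /* left vertices, matching the matrix size used in this tutorial */
#define N 6 /* right vertices */
#define NIL (-1)
#define INF (INT_MAX / 2) /* "unreached"; halved so INF + 1 cannot overflow */

static int pairU[M]; /* pairU[u] = right vertex matched to u, or NIL */
static int pairV[N]; /* pairV[v] = left vertex matched to v, or NIL */
static int dist[M];  /* BFS layer of each left vertex */

/* Layers the left vertices by alternating-path distance from the free ones.
 * Returns true if some free right vertex is reachable, which means an
 * augmenting path exists. */
static bool bfs(int g[M][N]) {
    int queue[M], head = 0, tail = 0;
    bool found = false;
    for (int u = 0; u < M; u++) {
        if (pairU[u] == NIL) { dist[u] = 0; queue[tail++] = u; }
        else dist[u] = INF;
    }
    while (head < tail) {
        int u = queue[head++];
        for (int v = 0; v < N; v++) {
            if (!g[u][v]) continue;
            int w = pairV[v];
            if (w == NIL) {
                found = true; /* free right vertex reached */
            } else if (dist[w] == INF) {
                dist[w] = dist[u] + 1; /* follow the matched edge back */
                queue[tail++] = w;
            }
        }
    }
    return found;
}

/* Tries to augment from left vertex u, moving only to the next BFS layer. */
static bool dfs(int g[M][N], int u) {
    for (int v = 0; v < N; v++) {
        if (!g[u][v]) continue;
        int w = pairV[v];
        if (w == NIL || (dist[w] == dist[u] + 1 && dfs(g, w))) {
            pairU[u] = v;
            pairV[v] = u;
            return true;
        }
    }
    dist[u] = INF; /* dead end: prune u for the rest of this phase */
    return false;
}

/* Returns the size of a maximum matching; pairU and pairV hold the pairs. */
int hopcroft_karp(int g[M][N]) {
    for (int u = 0; u < M; u++) pairU[u] = NIL;
    for (int v = 0; v < N; v++) pairV[v] = NIL;
    int matching = 0;
    while (bfs(g)) /* one phase per successful BFS */
        for (int u = 0; u < M; u++)
            if (pairU[u] == NIL && dfs(g, u))
                matching++;
    return matching;
}
```

Passing the `bpGraph` matrix from the program above to `hopcroft_karp` should give the same matching size of 5. For adjacency-list input, the two inner `for (int v ...)` loops would iterate over the neighbors of `u` instead of all N columns.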

Conclusion

In this tutorial, we learned about maximum bipartite graphs and how to find the maximum bipartite matching in a graph using various algorithms. We also explored the properties and applications of maximum bipartite graphs in real-world scenarios. By understanding these concepts, you can solve a wide range of optimization problems efficiently.